Abstract

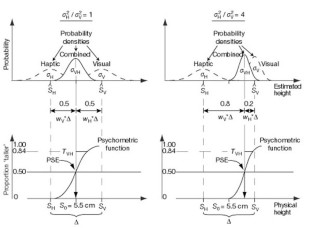

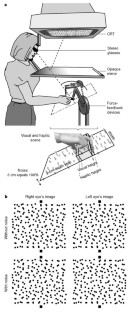

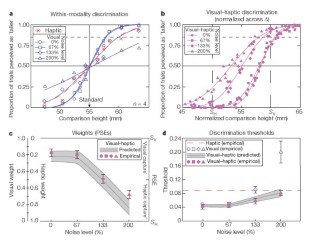

When a person looks at an object while exploring it with their hand, vision and touch both provide information for estimating the properties of the object. Vision frequently dominates the integrated visual–haptic percept, for example when judging size, shape or position [1–3], but in some circumstances the percept is clearly affected by haptics [4–7]. Here we propose that a general principle, which minimizes variance in the final estimate, determines the degree to which vision or haptics dominates. This principle is realized by using maximum-likelihood estimation [8–15] to combine the inputs. To investigate cue combination quantitatively, we first measured the variances associated with visual and haptic estimation of height. We then used these measurements to construct a maximum-likelihood integrator. This model behaved very similarly to humans in a visual–haptic task. Thus, the nervous system seems to combine visual and haptic information in a fashion that is similar to a maximum-likelihood integrator. Visual dominance occurs when the variance associated with visual estimation is lower than that associated with haptic estimation.
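The variance-minimizing rule described above can be illustrated with a minimal sketch. It assumes the visual and haptic estimates carry independent Gaussian noise, in which case the maximum-likelihood combination is a weighted average with weights proportional to each cue's inverse variance. The names `Cue` and `combine_cues` and the numbers in the usage example are hypothetical and are not taken from the article.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    """A single-cue estimate (hypothetical container, not from the article)."""
    estimate: float  # e.g. perceived height in mm
    variance: float  # variance of that estimate, must be positive


def combine_cues(visual: Cue, haptic: Cue) -> Cue:
    """Combine two cues by inverse-variance weighting.

    Assumes independent Gaussian noise on each cue, so this weighted
    average is the maximum-likelihood estimate.
    """
    if visual.variance <= 0 or haptic.variance <= 0:
        raise ValueError("variances must be positive")

    # Reliability is the inverse of variance.
    r_visual = 1.0 / visual.variance
    r_haptic = 1.0 / haptic.variance

    # Weights sum to 1; the more reliable cue gets the larger weight.
    w_visual = r_visual / (r_visual + r_haptic)
    w_haptic = 1.0 - w_visual

    combined_estimate = w_visual * visual.estimate + w_haptic * haptic.estimate
    # The combined variance is never larger than either single-cue variance.
    combined_variance = 1.0 / (r_visual + r_haptic)
    return Cue(combined_estimate, combined_variance)


if __name__ == "__main__":
    # Illustrative values only: vision is four times more reliable than touch.
    visual = Cue(estimate=55.0, variance=1.0)
    haptic = Cue(estimate=58.0, variance=4.0)
    print(combine_cues(visual, haptic))
```

With these illustrative values the visual weight is 0.8, so the combined estimate lies close to the visual one (visual dominance) and the combined variance falls below the visual variance. Swapping the two variances would shift the estimate toward the haptic cue instead.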

References

1. Rock, I. & Victor, J. Vision and touch: An experimentally created conflict between the two senses. Science 143, 594–596 (1964).

2. Hay, J. C., Pick, H. L. Jr & Ikeda, K. Visual capture produced by prism spectacles. Psychonomic Sci. 2, 215–216 (1965).

3. Warren, D. H. & Rossano, M. J. in The Psychology of Touch (eds Heller, M. A. & Schiff, W.) 119–137 (Erlbaum, Hillsdale, New Jersey, 1991).

4. Power, R. P. The dominance of touch by vision: Sometimes incomplete. Perception 9, 457–466 (1980).

5. Welch, R. B. & Warren, D. H. Immediate perceptual response to intersensory discrepancy. Psychol. Bull. 88, 638–667 (1980).

6. Lederman, S. J. & Abbott, S. G. Texture perception: Studies of intersensory organization using a discrepancy paradigm, and visual versus tactual psychophysics. J. Exp. Psychol. Hum. Percept. Perform. 7, 902–915 (1981).

7. Heller, M. A. Haptic dominance in form perception with blurred vision. Perception 12, 607–613 (1983).

8. Clark, J. J. & Yuille, A. L. Data Fusion for Sensory Information Processing Systems (Kluwer Academic, Boston, 1990).

9. Blake, A., Bülthoff, H. H. & Sheinberg, D. Shape from texture: Ideal observer and human psychophysics. Vision Res. 33, 1723–1737 (1993).

10. Landy, M. S., Maloney, L. T., Johnston, E. B. & Young, M. Measurement and modeling of depth cue combination: In defense of weak fusion. Vision Res. 35, 389–412 (1995).

11. Ghahramani, Z., Wolpert, D. M. & Jordan, M. I. in Self-organization, Computational Maps, and Motor Control (eds Morasso, P. G. & Sanguineti, V.) 117–147 (Elsevier, Amsterdam, 1997).

12. Knill, D. C. Discrimination of planar surface slant from texture: Human and ideal observers compared. Vision Res. 38, 1683–1697 (1998).

13. Backus, B. T. & Banks, M. S. Estimator reliability and distance scaling in stereoscopic slant perception. Perception 28, 417–442 (1999).

14. van Beers, R. J., Sittig, A. C. & Denier van der Gon, J. J. Integration of proprioceptive and visual position information: An experimentally supported model. J. Neurophysiol. 81, 1355–1364 (1999).

15. Schrater, P. R. & Kersten, D. How optimal depth cue integration depends on the task. Int. J. Comp. Vis. 40, 71–89 (2000).

16. Gibson, J. J. Adaptation, after-effect, and contrast in the perception of curved lines. J. Exp. Psychol. 16, 1–31 (1933).

17. Festinger, L., Burnham, C. A., Ono, H. & Bamber, D. Efference and the conscious experience of perception. J. Exp. Psychol. 74 (4), 1–36 (1967).

18. Singer, G. & Day, R. H. Visual capture of haptically judged depth. Percept. Psychophys. 5, 315–316 (1969).

19. Tastevin, J. En partant de l’experience d’Aristote. L’Encephale 1, 57–84 (1937).

20. Mon-Williams, M., Wann, J. P., Jenkinson, M. & Rushton, K. Synaesthesia in the normal limb. Proc. R. Soc. Lond. B 264, 1007–1010 (1997).

21. Pavani, F., Spence, C. & Driver, J. Visual capture of touch: out-of-the-body experiences with rubber gloves. Psychol. Sci. 11, 353–359 (2000).

22. Heller, M. A. Visual and tactual texture perception: Intersensory cooperation. Percept. Psychophys. 31, 339–344 (1982).

23. Banks, M. S. & Backus, B. T. Extra-retinal and perspective cues cause the small range of the induced effect. Vision Res. 38, 187–194 (1998).

24. Ernst, M. O., Banks, M. S. & Bülthoff, H. H. Touch can change visual slant perception. Nature Neurosci. 3, 69–73 (2000).

25. Peña, J. L. & Konishi, M. Auditory spatial receptive fields created by multiplication. Science 292, 249–252 (2001).

Acknowledgements

We thank M. Landy for comments on the manuscript, and H. Ernst, X. Moncada, C. Alderson and S. Kashiwada for participating as observers. This research was supported by research grants from the Air Force Office of Scientific Research and the National Institutes of Health, and by an equipment grant from Silicon Graphics.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

About this article

Cite this article

Ernst, M., Banks, M. Humans integrate visual and haptic information in a statistically optimal fashion. Nature 415, 429–433 (2002). https://doi.org/10.1038/415429a

DOI: https://doi.org/10.1038/415429a
