Abstract

Purpose:

Broadening access to genomic testing and counseling will be necessary to realize the benefits of personalized health care. This study aimed to assess the feasibility of delivering a standardized genomic care model for inherited retinal dystrophy (IRD) and of using selected measures to quantify its impact on patients.

Methods:

A pre-/post- prospective cohort study recruited 98 patients affected by IRD to receive standardized multidisciplinary care. A checklist was used to assess the fidelity of the care process. Three patient-reported outcome measures—the Genetic Counselling Outcome Scale (GCOS-24), the ICEpop CAPability measure for Adults (ICECAP-A), and the EuroQol 5-dimension questionnaire (EQ-5D)—and a resource-use questionnaire were administered to investigate rates of missingness, ceiling effects, and changes over time.

Results:

The care model was delivered consistently. Rates of missingness were higher for the genetics-specific measure (GCOS-24), and considerable ceiling effects were observed for the generic measure (EQ-5D). The ICECAP-A yielded less missing data and showed no significant ceiling effects. It was feasible to use telephone interviews for follow-up data collection.

Conclusion:

The study highlighted challenges and solutions associated with efforts to standardize genomic care for IRD. The study identified appropriate methods for a future definitive study to assess the clinical effectiveness and cost-effectiveness of the care model.

Genet Med advance online publication 02 March 2017


Introduction

High-throughput molecular approaches have rapidly moved from the research arena into direct clinical care, a powerful demonstration of the translation of biomedical research into practice. Such approaches have enormous potential across all areas of medicine to improve the effectiveness of molecular diagnosis and to strengthen personalized approaches to health care. Their impact on diagnostic rates and treatment has already been demonstrated across a broad range of specialties.1,2,3,4

To achieve widespread implementation of genomic care, it will be necessary to alter care pathways to incorporate early genomic testing and to expand the delivery of genetic and genomic care beyond clinical genetics into mainstream clinical specialties.5,6,7 A recent review of genetic service models suggested that multidisciplinary clinics and coordinated services are key to delivering appropriate care for rare genetic disorders.8 The delivery of integrated genomic approaches will therefore require significant changes in multidisciplinary workforce planning and training.9 Furthermore, because such changes will inevitably affect commissioning and payment, there is a compelling need to establish whether new working practices are feasible, acceptable to patients, and represent value for money.10

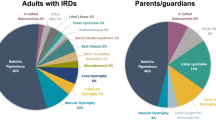

Inherited retinal dystrophies (IRDs) are a major cause of blindness among children and working-age adults,11,12 affecting 1 in every 3,000 people.13 IRDs are heterogeneous in genetic cause, mode of inheritance, and phenotypic expression. Currently, there is no effective way of arresting or reversing the resultant sight loss, although novel therapeutic strategies for certain forms of IRD are in development.14 There are no gold-standard recommendations for how best to provide genetic ophthalmology services for IRD, which can comprise genetic counseling, risk assessment, risk communication, genetic testing, information provision, and physical examination. Until now, the lack of clear guidelines on how to deliver clinical and diagnostic services for IRD has resulted in variation in practice across the United Kingdom.5,15 Approved genetic-based diagnostic tests for IRD have been nationally available for more than 10 years, but audit data provide evidence of geographical inequity of access.15 Such a service is an example of a “complex intervention” (one with several interacting components),16 which raises special challenges for evaluators, including how to standardize its design and delivery.17 A standardized care model for people with suspected IRD could, in theory, enable consistent service provision and so address such variations.

A care model (see Figures 1 and 2) was developed using the Kellogg Logic Model Development Guide20 in response to a stated need by patients with IRD, informed by qualitative research that explored these needs.18,19 The care model was implemented at multidisciplinary clinics at a single regional genetics center by ophthalmologists (for eye examinations, diagnosis, and clinical management), genetic counselors (to provide counseling support and convey genetic information), and eye clinic liaison officers (to provide further practical and emotional support). Care was provided at multidisciplinary clinics so that consultations were not delayed by the need to refer elsewhere, patients did not need to travel to meet different specialists, and communication between specialties improved because it could happen face-to-face in the clinic.

Provision of the integrated care model for inherited retinal dystrophies. ᵃIncluding examination, OCT, and ERGs. ᵇIncluding information regarding treatment and management. CVI, certificate of vision impairment; ERG, electroretinogram; OCT, optical coherence tomography; PIP, personal independence payment; VI, visual impairment.

The aim of this study was to assess the fidelity of delivering the standardized care model and the feasibility of using selected measures to quantify its impact on patients and health care resource use. The study would inform a future definitive study to assess the clinical effectiveness and cost-effectiveness of the care model.

Materials and Methods

This study used a pre-/post- design to understand the potential impact of the standardized care model using selected measures of outcome and health care resource use.

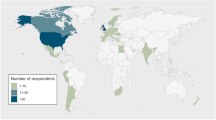

Patient population

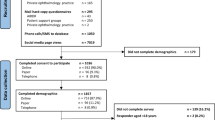

The eligible population comprised all adult patients accessing the standardized care model during the recruitment period (22 November 2013 to 28 November 2014), including both existing and new users of the service. Patients were eligible for inclusion if they had been referred for a suspected IRD and were 18 years or older on the date of the clinic visit. Patients were ineligible if written informed consent could not be obtained or if they were unable to complete patient-reported outcome measures (PROMs) because of learning difficulties or insufficient English language skills. A genetic counselor identified potentially eligible participants before their appointment and sent them a study information sheet. They were then recruited by a researcher based in the clinic reception area, who obtained informed consent and administered the PROMs before the patient consultations.

Fidelity

In a typical appointment, the patient would see an ophthalmologist, a genetic counselor, and an eye clinic liaison officer. A manual checklist was attached to the front of each patient file, which followed the patient as he or she moved between the different specialties. The checklist comprised six key areas covering the elements of the consultation process: diagnosis and management, provision of clinical information, provision of research information, decision making, counseling and communication, and practical support. Clinicians worked together to provide care in these six key areas, which were the appointment deliverables outlined in Figure 2. All members of the multidisciplinary team were asked to update the checklist after each consultation with a recruited patient as a mechanism for confirming the fidelity of delivery of the standardized care model. Clinicians were asked to record the time spent on each element of the care model and whether patients were new to the service. Clinicians also indicated, using a checkbox, whether the patient was provided with a personalized follow-up plan.

Ten appointments were recorded on video and independently assessed afterward to judge clinicians’ adherence to the care model and the accuracy of the completed checklist. Clinicians and patients consented to being recorded and evaluated.

Outcome measures

Three PROMs were administered in this study: the 24-item Genetic Counselling Outcome Scale (GCOS-24), the ICEpop CAPability measure for Adults (ICECAP-A), and the three-level version of the EuroQol five-dimensional questionnaire (EQ-5D-3L).

The selection of the GCOS-24 was informed by a previous program of work on how to value outcomes of clinical genetics services.21,22,23 This work pointed toward the need for a broader evaluative scope in assessing the benefits of clinical genetics services, which, as complex interventions, have broader objectives than only change in health status. The GCOS-24 was developed and validated to measure the patient benefits from clinical genetics services.24 Specifically, the 24-item scale can be used to measure changes in “empowerment” levels for patients who receive genetic counseling and/or testing and captures patient benefits conceptualized as perceptions of control, hope for the future, and emotional regulation relating to the genetic condition in the family. Responses to GCOS-24 questions are given on a Likert scale from 1 to 7 (strongly disagree to strongly agree); 4 is a neutral response. A completed GCOS-24 questionnaire yields scores between 24 and 168; higher scores are preferable.

The ICECAP-A was identified as a relevant measure for this patient population through qualitative face-to-face interviews with patients with IRD.18,19 The ICECAP-A was designed to measure a concept called “capability” for use in economic evaluation.25 Its development was theoretically grounded in work by economists who argued that outcome measurement should focus on what people are capable of doing, not only on health status.26 The ICECAP-A covers five domains (attachment, stability, achievement, enjoyment, and autonomy), each with four levels; higher levels indicate greater capability in that domain. Its UK scoring tariff can be used to convert responses to scores between 0 and 1, where 0 represents “no capability” and 1 represents “full capability.”27 The ICECAP-A has exhibited desirable validity and acceptability in the general population.28 Qualitative work suggested that autonomy, one of the ICECAP-A domains, is particularly important for people diagnosed with inherited eye conditions.18 Measures of capability could, in theory, also capture the impact of being able to make an informed decision, which has been identified as a core goal of clinical genetics services.23

The EuroQol EQ-5D (3-level version) was included because it is a widely used, validated measure of health status that is recommended for capturing benefit in cost-effectiveness analyses.29,30 The EQ-5D-3L covers five domains (mobility, self-care, usual activities, pain/discomfort, and anxiety/depression), each with three levels, and completion of the EQ-5D yields a descriptive health state. The EQ-5D UK scoring tariff can then be used to convert health states to “utility” scores between −0.594 and 1, where 1 represents “full health,” 0 a state equivalent to death, and negative scores states considered “worse than death.”31 Previous work has suggested that health status is unlikely to be improved by clinical genetics services when patients cannot be offered an active treatment.23 However, it was still considered important to include this measure to provide empirical evidence on whether an intervention for IRD could affect health status.
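To make the tariff conversion concrete, the Python sketch below applies UK EQ-5D-3L decrements to a five-digit health state. The coefficient values are our transcription of the Dolan (1997) time trade-off tariff and are an assumption to check against the published source before real use; the function name and the string encoding of states are illustrative, not the study's Stata code.

```python
# UK EQ-5D-3L (MVH) time trade-off decrements, transcribed from Dolan (1997).
# Assumption: verify these values against the published tariff before real use.
CONSTANT = 0.081  # subtracted once if any dimension is above level 1
N3 = 0.269        # subtracted once if any dimension is at level 3
DECREMENTS = {    # dimension -> {level: decrement}; level 1 ("no problems") has none
    "mobility": {2: 0.069, 3: 0.314},
    "self_care": {2: 0.104, 3: 0.214},
    "usual_activities": {2: 0.036, 3: 0.094},
    "pain_discomfort": {2: 0.123, 3: 0.386},
    "anxiety_depression": {2: 0.071, 3: 0.236},
}


def eq5d3l_uk_utility(state: str) -> float:
    """Convert a five-digit EQ-5D-3L state such as '11223' to a UK utility score."""
    if len(state) != 5 or any(ch not in "123" for ch in state):
        raise ValueError(f"invalid EQ-5D-3L health state: {state!r}")
    levels = [int(ch) for ch in state]
    utility = 1.0  # every respondent starts at full health
    if any(level > 1 for level in levels):
        utility -= CONSTANT
    # Dict order matches the EQ-5D dimension order of the five digits.
    for dimension, level in zip(DECREMENTS, levels):
        if level > 1:
            utility -= DECREMENTS[dimension][level]
    if 3 in levels:
        utility -= N3
    return round(utility, 3)


print(eq5d3l_uk_utility("11111"))  # 1.0
print(eq5d3l_uk_utility("33333"))  # -0.594
```

Under these values the best and worst states reproduce the range of 1 to −0.594 stated above, which is a quick sanity check on the transcribed coefficients.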

Resource use

A resource-use questionnaire was used to determine the services that patients accessed over the month prior to interview and the number of times each of these services was accessed. The questionnaire was designed for assisted completion (at baseline) and telephone interview (at follow-up). The questionnaire was based on the Client Service Receipt Inventory32 and was adapted to take account of the health-care services likely to be used by people with, or at risk of, vision impairment.

Data collection

Data were collected at baseline (defined as the day of the clinic but before the patient consultations) and at 1 and 3 months after baseline. All three PROMs and the resource-use questionnaire (in paper format) were completed by patients in the presence of a researcher in the clinic at baseline and then followed up by telephone interview at 1 month and 3 months after the clinic visit. All written materials were made available in large-print format to promote the inclusion of people with visual impairment.

Statistical analysis

The fidelity of the standardized care model was assessed by quantifying the average time spent by clinicians delivering each of the six defined elements. The feasibility of the PROMs was assessed by identifying ceiling/floor effects and the completion rates for each questionnaire. Descriptive analyses of average PROM scores and costs at the three time points were also undertaken. Changes in PROM scores at the 3-month follow-up were calculated with 95% confidence intervals and standard errors to enable power calculations for a future study, although some authors have cautioned against the use of pilot studies to inform power calculations.33 All statistical output was produced using Stata (V.13.1, StataCorp, College Station, TX).
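As a hedged illustration of how pilot change scores could feed a power calculation, the sketch below computes the mean within-patient change, its standard error, and a normal-approximation 95% confidence interval, then applies a standard sample-size formula for a paired comparison, n = ((z_(1−α/2) + z_(1−β)) × SD_change / δ)². The function names and inputs are hypothetical. A t-based interval, as statistical packages typically report for small samples, would be somewhat wider, and the caution about pilot-based power calculations noted above still applies.

```python
import math
from statistics import NormalDist, mean, stdev


def change_summary(baseline, follow_up, level=0.95):
    """Mean within-patient change, its SD and SE, and a normal-approximation CI.

    Assumes complete pairs (one baseline and one follow-up score per patient)."""
    if len(baseline) != len(follow_up) or len(baseline) < 2:
        raise ValueError("need paired scores for at least two patients")
    diffs = [after - before for before, after in zip(baseline, follow_up)]
    m, sd = mean(diffs), stdev(diffs)
    se = sd / math.sqrt(len(diffs))
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    return {"mean": m, "sd": sd, "se": se, "ci": (m - z * se, m + z * se)}


def paired_sample_size(delta, sd_diff, alpha=0.05, power=0.80):
    """Patients needed to detect a mean change `delta` with a two-sided paired test
    (normal approximation, before any allowance for attrition)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) * sd_diff / delta) ** 2)


# Hypothetical inputs, not study results: detect a 0.05 change when the SD of change is 0.15.
print(paired_sample_size(0.05, 0.15))  # 71
```

In practice the `sd` returned by `change_summary` would supply `sd_diff`, and the resulting number would still need inflating for the follow-up attrition observed in this study.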

A ceiling effect is observed when a considerable proportion of subjects respond with the highest possible score for a given measure.34 A floor effect is observed when a considerable proportion of subjects respond with the lowest possible score for a given measure.34 Ceiling/floor effects mean that the measure is unable to show improvements/declines in patient outcomes at the extremes of the measure’s scale. We looked at the proportion of responses with the lowest and highest possible scores for each measure. We compared these proportions to a commonly used threshold (15% of responses)35 to confirm or rule out the presence of ceiling/floor effects.
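The ceiling/floor check described above reduces to counting responses at each end of a scale. A minimal Python sketch, assuming scores have already been computed per respondent, missing responses are coded as None, and the scale limits are passed in (for example, 24 and 168 for the GCOS-24, or −0.594 and 1 for the EQ-5D-3L). Exact equality is used on the assumption that tariff scores are stored as the exact rounded values.

```python
def ceiling_floor(scores, lowest, highest, threshold=0.15):
    """Proportion of observed scores at each extreme of a scale, flagged against a threshold.

    Missing scores (None) are excluded from the denominator."""
    observed = [s for s in scores if s is not None]
    if not observed:
        raise ValueError("no observed scores")
    n = len(observed)
    at_floor = sum(1 for s in observed if s == lowest) / n
    at_ceiling = sum(1 for s in observed if s == highest) / n
    return {
        "floor": at_floor,
        "ceiling": at_ceiling,
        "floor_effect": at_floor > threshold,  # strictly above the threshold counts as an effect
        "ceiling_effect": at_ceiling > threshold,
    }


# Hypothetical EQ-5D-3L utilities (None = not collected), scale limits -0.594 and 1.
example = [1.0, 1.0, 0.8, 0.7, None, 0.62, 1.0, 0.85]
print(ceiling_floor(example, lowest=-0.594, highest=1.0))
```

Whether a proportion exactly equal to 15% counts as an effect is a convention worth fixing in advance; this sketch flags only proportions strictly above it.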

Each PROM was analyzed in accordance with standard practice for that measure. GCOS-24 questions marked as not applicable (NA) were recoded as the neutral response (4), as per the instructions at the top of the questionnaire. To ensure that 7 indicated the best scenario and 1 the worst, responses to questions 4, 5, 10–13, 17, 18, 21, and 22 were reversed. GCOS-24 scores were calculated as the sum of the responses. Each ICECAP-A response has a corresponding value in a published UK tariff, and ICECAP-A scores were generated by summing these values.27 For the EQ-5D, each individual was assigned a score of 1, and the set of UK tariff decrements was then applied according to the domains in which the respondent indicated problems.31
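A minimal Python sketch of the GCOS-24 scoring rules described above: NA recoded to 4, the listed items reversed (8 minus the response, so that 7 is always best), and the 24 responses summed. Returning a missing total when any item is unanswered is an assumption that mirrors how baseline missingness is described in the Results; the function name and input format (a dict keyed by item number) are also assumptions.

```python
REVERSED_ITEMS = {4, 5, 10, 11, 12, 13, 17, 18, 21, 22}  # reverse-scored items listed in the text
NEUTRAL = 4  # "not applicable" responses are recoded to this value


def gcos24_score(responses):
    """Total GCOS-24 score (range 24-168, higher is better).

    `responses` maps item number (1-24) to an integer 1-7 or the string "NA".
    Returns None if any item is unanswered, so the total is treated as missing."""
    total = 0
    for item in range(1, 25):
        answer = responses.get(item)
        if answer is None:
            return None
        if answer == "NA":
            answer = NEUTRAL
        if not isinstance(answer, int) or not 1 <= answer <= 7:
            raise ValueError(f"item {item}: invalid response {answer!r}")
        if item in REVERSED_ITEMS:
            answer = 8 - answer  # reverse so that 7 is always the best response
        total += answer
    return total


# Hypothetical respondent: 6 ("agree") throughout, with item 3 marked NA.
example = {item: 6 for item in range(1, 25)}
example[3] = "NA"
print(gcos24_score(example))  # 102
```

Because 4 is the midpoint of the 1 to 7 scale, an NA response contributes 4 whether or not its item is reversed.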

Patients who had already received some care from the genetic eye clinic before baseline may not represent the appropriate study population. Our analysis of PROM scores therefore considered all patients collectively, together with a predefined subgroup analysis of patients who were new to the service at baseline.

Average PROM scores were calculated using both complete-case (CC) analysis and multiple imputation (MI). CC analysis includes only patients with complete data at all time points for a given PROM. MI imputes missing values and is widely advocated as an improvement over CC analysis because it makes use of available data that would otherwise be discarded.36 MI is also considered less biased than CC analysis when data are missing at random. Mann-Whitney U-tests found no significant differences in baseline PROM scores between patients who had missing data at follow-up and those who did not. MI was conducted to reflect the methods that would be used in the analysis of the future study. For imputation, PROM scores at each follow-up were modeled by linear regression on baseline score (for the respective measure), age, sex, and travel time to clinic. To impute missing GCOS-24 scores at baseline, the baseline score was omitted from the regression. The number of imputations was considered sufficient if it exceeded 100 times the largest fraction of missing information, an accepted rule of thumb for multiple imputation.37 Final point estimates were the means of the estimates across the imputed data sets, and Rubin’s rules were applied to combine within- and between-imputation variance into the measures of uncertainty.
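The pooling step can be illustrated with a minimal Python sketch of Rubin's rules for a single scalar estimate, such as a mean PROM score. Here the fraction of missing information (FMI) is approximated as the between-imputation share of total variance, which omits the small-sample degrees-of-freedom adjustment. This is not the study's Stata code; the names and inputs are hypothetical.

```python
from statistics import mean, variance


def pool_rubin(estimates, variances):
    """Combine one scalar estimate and its squared SE from each of m imputed data sets."""
    m = len(estimates)
    if m < 2 or len(variances) != m:
        raise ValueError("need an estimate and a variance from each of at least two imputations")
    q_bar = mean(estimates)        # pooled point estimate: mean across imputations
    within = mean(variances)       # average within-imputation variance (W)
    between = variance(estimates)  # between-imputation variance (B), m - 1 denominator
    total = within + (1 + 1 / m) * between  # Rubin's total variance (T)
    # Approximate FMI: share of T attributable to missing data.
    fmi = (1 + 1 / m) * between / total if total > 0 else 0.0
    return {"estimate": q_bar, "se": total ** 0.5, "fmi": fmi}


def enough_imputations(m, largest_fmi):
    """Rule of thumb from the text: m should exceed 100 x the largest FMI."""
    return m > 100 * largest_fmi


# Hypothetical mean ICECAP-A scores and squared SEs from five imputed data sets.
pooled = pool_rubin([0.78, 0.80, 0.79, 0.81, 0.77], [0.0004] * 5)
print(pooled, enough_imputations(5, pooled["fmi"]))
```

With these made-up inputs the approximate FMI is about 0.43, so five imputations fall well short of the rule of thumb, which would call for at least 43.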

Aggregated resource-use data were combined with unit costs to estimate average costs at each time point. Unit costs for National Health Service (NHS) services were obtained from published NHS reference costs38 and the Personal Social Services Research Unit (PSSRU) unit costs of health and social care.39
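Combining resource use with unit costs amounts to multiplying each patient's contacts by the corresponding unit cost and averaging across patients. The Python sketch below illustrates this under stated assumptions: the service names and unit costs are hypothetical placeholders, not values from NHS reference costs or the PSSRU.

```python
# Hypothetical unit costs in GBP, for illustration only; real values would come from
# NHS reference costs and the PSSRU unit costs of health and social care.
UNIT_COSTS = {"gp_visit": 30.0, "optometrist_visit": 25.0, "eye_outpatient_visit": 100.0}


def patient_cost(contacts, unit_costs=UNIT_COSTS):
    """Cost for one patient over the recall period: contacts with each service x unit cost."""
    missing = set(contacts) - set(unit_costs)
    if missing:
        raise KeyError(f"no unit cost for: {sorted(missing)}")
    return sum(n * unit_costs[service] for service, n in contacts.items())


def mean_cost(patients, unit_costs=UNIT_COSTS):
    """Average cost across patients with resource-use data at one time point."""
    if not patients:
        raise ValueError("no patients with resource-use data")
    return sum(patient_cost(p, unit_costs) for p in patients) / len(patients)


# Three hypothetical respondents' 1-month recall; a patient with no contacts costs 0.
print(mean_cost([{"gp_visit": 2}, {"optometrist_visit": 1, "eye_outpatient_visit": 1}, {}]))
```

Raising an error for a service without a unit cost, rather than silently costing it at zero, keeps gaps in the costing visible.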

Ethics approval

The study was approved by NRES Committee North West–Haydock (reference 13/NW/0590).

Results

Patient characteristics

A total of 104 potential study participants were approached at baseline, of whom 6 declined to participate because they did not wish to complete questionnaires. Ninety-eight patients received the standardized care model and consented to participate in the study. The mean age was 43.6 years; 58 women and 40 men were recruited. At baseline, 46 patients were classified as “new patients” accessing the service for the first time. Baseline patient characteristics and data on the feasibility of using each PROM are presented in Table 1.

Patient-reported outcomes

To assess the feasibility of using each PROM to quantify the impact of the care model, we explored rates of missingness, ceiling/floor effects, and changes in PROM scores over time.

Table 1 shows that the rates of missingness for the GCOS-24 were 38% at 1 month and 34% at 3 months. GCOS-24 data were also missing for 12 patients at baseline because at least one question was left unanswered. Some patients stated that GCOS-24 items were “not applicable” (NA) to them; to facilitate analysis, and in line with the questionnaire’s instructions, these items were recoded to the neutral response. Supplementary Table S1 online shows how many GCOS-24 questions patients considered NA. Questions were often marked as NA if they related to the impact on the patient’s children or future children (Q3, Q13, Q17, Q19, Q21, and Q24). Other questions marked as NA related to knowledge about available options and the ability to explain one’s condition to others and to at-risk family members (Q10, Q15, Q16, and Q18).

The rates of missingness for the ICECAP-A and EQ-5D were equal: 33% and 34% at 1 and 3 months, respectively. There were no commonly missed items in the ICECAP-A or EQ-5D because the questionnaires were either fully completed or not completed at all for these measures. Data were missing in these measures because patients either were not contactable or did not want to complete PROMs at a given follow-up.

No respondents gave the highest possible score for the GCOS-24, and no respondents reported the lowest possible score for any of the three measures.

The 15% ceiling-effect threshold was not exceeded for the ICECAP-A at any time point; however, a considerable proportion of responses still had the highest possible score (14% at baseline, 11% at 1 month, and 13% at 3 months).

The proportion of EQ-5D responses at the highest possible score exceeded the specified threshold to confirm the presence of ceiling effects at all three time points (34% at baseline, 23% at 1 month, and 31% at 3 months). This meant that the EQ-5D was unable to detect potential improvements in health status from baseline for 34% of the sample.

Because a response at the ceiling requires every domain to be at its highest level simultaneously, it was also of interest to investigate which individual domains respondents most commonly scored at the highest level. The EQ-5D domain in which respondents most frequently reported no problems was “self-care” (80% of respondents at baseline, complete case). Similarly, the ICECAP-A domain in which respondents most frequently reported the highest capability was “attachment” (64% of respondents at baseline, complete case), which reflects the individual’s ability to have love, friendship, and support.

Table 2 presents average PROM scores at each time point for all patients (n = 98) and for new patients (n = 46), from both CC and MI analyses. The study was not powered to test whether scores had improved significantly by the 3-month follow-up; however, a trend toward improvement was seen for all three measures. The distributions of PROM scores at all time points are provided in Supplementary Figure S1 online.

Fidelity

Seventy-six patient checklists were completed, representing 78% of the total patient sample. Follow-up plans were recorded for 59 patients (78% of completed checklists). Table 3 shows the time health-care professionals spent delivering the service. Average times are reported as medians with interquartile ranges to account for the skewed nature of the data. Discussion points in the care model were not always addressed, although clinicians were permitted to be flexible in tailoring discussions to the needs of the patient. All elements were used across the consultations, and it was demonstrated that the entire range could be delivered by a team of professionals within a single consultation.
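Reporting skewed consultation times as medians with interquartile ranges can be sketched in a few lines of Python. The input list and function name are hypothetical, and quartile definitions differ between software packages, so the interquartile range from this sketch may differ slightly from Stata's output.

```python
from statistics import median, quantiles


def median_iqr(minutes):
    """Median and interquartile range (Q1, Q3) of recorded times, skipping missing values."""
    observed = sorted(t for t in minutes if t is not None)
    if len(observed) < 2:
        raise ValueError("need at least two recorded times")
    q1, _, q3 = quantiles(observed, n=4, method="inclusive")
    return median(observed), (q1, q3)


# Hypothetical minutes recorded for one care-model element (None = not recorded).
print(median_iqr([25, 5, None, 15, 10, 20]))  # (15, (10.0, 20.0))
```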

Resource use

Supplementary Table S2 online shows the types of resources used, consistent with using an NHS perspective, over the month prior to completion of the questionnaire. Average usage, and therefore average cost, of accessing community and hospital-based NHS services decreased for patients affected by IRD after receiving the care model. A complete list of non-NHS services accessed by patients is provided in Supplementary Table S3 online.

Discussion

The delivery of genomic counseling and testing within routine mainstream clinical care represents a considerable challenge. This study assessed the fidelity of a standardized care model for patients with IRD and the feasibility of using the selected PROMs and resource-use questionnaire to quantify its impact. A checklist that asked clinicians to capture the elements of the standardized care model they delivered indicated that it could be delivered in a consistent way. This suggests that it is feasible to move forward with this standardized care model and that it may be possible to assess its impact in a future substantive, prospective study.

The ICECAP-A was identified as a potentially useful measure of the impact of the care model because it had fewer missing responses than the GCOS-24 and fewer responses with the highest possible score at baseline than the EQ-5D. Although the GCOS-24 was specifically designed for use in the context of a clinical genetics service,24 this study found that its completion rates were lower than those of the ICECAP-A and EQ-5D and that questions involving reproductive choices and children were often considered not relevant by study participants. A qualitative study would be useful to understand the reasons for this, particularly whether answering NA was used as a way to “opt out” of questions that caused concern or worry. The length of the measure (24 questions) may also have been problematic for visually impaired respondents. Further research is suggested to explore whether a shortened version of the GCOS-24 would be better suited to a trial for patients with IRD; any short form would require revalidation.

Because the EQ-5D displayed considerable ceiling effects, further empirical work is needed to determine whether it is suitable for use in populations with genetic eye conditions. A five-level version of the EQ-5D has recently been developed to address criticisms regarding responsiveness and ceiling effects.40 The five-level version could potentially offer improvements over the three-level version used in this study. One benefit of the EQ-5D is that, owing to its generic nature, it enables comparisons across populations and health conditions. Although it is unclear whether the three-level EQ-5D is an appropriate measure to capture the effects of a genomic care model, having the data enables these comparisons.

There were decreases in average ICECAP-A scores after 1 month, followed by increases after 3 months. Although the study was not sufficiently powered to assess these changes in terms of statistical significance, the results suggest that benefits of the care model may only accrue after a longer time period. This demonstrates the importance of choosing a suitable time horizon, especially when the intervention may have delayed benefits because of the need for patients to adjust to the diagnosis of an inherited condition.19

To assess fidelity, checklists were completed by the clinicians who delivered the intervention. An individual clinician had no incentive to falsify the checklist because it was used to guide the next clinician who saw the patient in the clinic. This method also reminded clinicians of the key deliverables of the care model. Our analysis showed that discussion points in the care model were not always addressed. This was not a pressing concern because clinicians were permitted to tailor discussions to the needs of the patient. However, it may have been useful to define minimum acceptable thresholds a priori for the delivery of each discussion point, both to make clinicians aware of the importance of each element of the care model and to provide a benchmark for confirming fidelity. Fidelity was also assessed from video recordings by independent observers. It is possible that clinicians altered their behavior while being recorded, in anticipation of being evaluated. This bias (often referred to as the Hawthorne effect) can arise whenever clinicians are observed, yet independent observation was necessary to confirm that fidelity had been recorded accurately.

A further potential limitation of the study was that, despite the pre-/post- design, patients were recruited at baseline regardless of whether they were new to the service. Some patients had therefore already received elements of the care model, so baseline results were confounded by previous visits and may not accurately represent the true effects of the care model. To capture the benefits of the care model from a patient’s first visit, a future study should recruit only new patients.

The care model was delivered in only one center, which may raise concerns about the external validity of the results. It is also possible that clinical geneticists could perform the same role as genetic counselors in delivering the care model. If other centers are provided with the care model in a replicable (manualized) format, similar results might be expected elsewhere.

In conclusion, this study provides evidence to support the fidelity of a standardized care model for patients with IRD in one center. It is suggested that a future study should recruit only new patients to identify the impact of the new model of care. The ICECAP-A was shown to be potentially useful in this context, whereas the genetics service–specific measure was found to require some adaptation for use in a future study. The key items of resource use from the NHS perspective were identified. A larger sample size would be required to detect statistically significant changes in a definitive study. The follow-up period for a study assessing the impact of a care model that focuses on achieving a genetic-based diagnosis should be at least 3 months. The findings from this study can be used to inform the design of a future definitive study to assess the clinical effectiveness and cost-effectiveness of a standardized care model for IRD within the context of mainstream ophthalmic care.

Disclosure

The authors declare no conflict of interest.

References

Vaxillaire M, Froguel P. Monogenic diabetes: Implementation of translational genomic research towards precision medicine. J Diabetes 2016;8:782–795.

Tarailo-Graovac M, Shyr C, Ross CJ, et al. Exome Sequencing and the Management of Neurometabolic Disorders. N Engl J Med 2016;374:2246–2255.

Green RC, Goddard KA, Jarvik GP, et al.; CSER Consortium. Clinical Sequencing Exploratory Research Consortium: Accelerating Evidence-Based Practice of Genomic Medicine. Am J Hum Genet 2016;98:1051–1066.

Lazaridis KN, Schahl KA, Cousin MA, et al.; Individualized Medicine Clinic Members. Outcome of whole exome sequencing for diagnostic Odyssey cases of an individualized medicine clinic: the Mayo Clinic experience. Mayo Clin Proc 2016;91:297–307.

Eden M, Payne K, Jones C, et al. Identifying variation in models of care for the genomic-based diagnosis of inherited retinal dystrophies in the United Kingdom. Eye (Lond) 2016;30:966–971.

Kingsmore SF, Petrikin J, Willig LK, Guest E. Emergency medical genomes: a breakthrough application of precision medicine. Genome Med 2015;7:82.

Mallett A, Corney C, McCarthy H, Alexander SI, Healy H. Genomics in the renal clinic - translating nephrogenetics for clinical practice. Hum Genomics 2015;9:13.

Battista RN, Blancquaert I, Laberge AM, van Schendel N, Leduc N. Genetics in health care: an overview of current and emerging models. Public Health Genomics 2012;15:34–45.

Mason-Suares H, Sweetser DA, Lindeman NI, Morton CC. Training the future leaders in personalized medicine. J Pers Med 2016;6:1.

van Nimwegen KJ, Schieving JH, Willemsen MA, et al. The diagnostic pathway in complex paediatric neurology: a cost analysis. Eur J Paediatr Neurol 2015;19:233–239.

Liew G, Michaelides M, Bunce C. A comparison of the causes of blindness certifications in England and Wales in working age adults (16–64years), 1999–2000 with 2009–2010. BMJ Open 2014;4:e004015.

Solebo AL, Rahi J. Epidemiology, aetiology and management of visual impairment in children. Arch Dis Child 2014;99:375–379.

Black G. Genetics for Ophthalmologists: The Molecular Genetic Basis of Ophthalmic Disorders. Remedica; London, 2002.

RP Fighting Blindness. RP Fighting Blindness—Research. 2015. http://www.rpfightingblindness.org.uk/index.php?tln=research. Accessed 22 October 2015.

Harrison M, Birch S, Eden M, et al. Variation in healthcare services for specialist genetic testing and implications for planning genetic services: the example of inherited retinal dystrophy in the English NHS. J Community Genet 2015;6:157–165.

Campbell M, Fitzpatrick R, Haines A, et al. Framework for design and evaluation of complex interventions to improve health. BMJ 2000;321:694–696.

Richards DA, Hallberg I. Complex Interventions in Health: An Overview of Research Methods. Routledge: Abingdon, UK: 2015.

Combs R, McAllister M, Payne K, et al. Understanding the impact of genetic testing for inherited retinal dystrophy. Eur J Hum Genet 2013;21:1209–1213.

Combs R, Hall G, Payne K, et al. Understanding the expectations of patients with inherited retinal dystrophies. Br J Ophthalmol 2013;97:1057–1061.

W.K. Kellogg Foundation. W.K. Kellogg Foundation Logic Model Development Guide. 2004. https://www.wkkf.org/resource-directory/resource/2006/02/wk-kellogg-foundation-logic-model-development-guide. Accessed 30 December 2015.

McAllister M, Payne K, Nicholls S, MacLeod R, Donnai D, Davies LM. Improving service evaluation in clinical genetics: identifying effects of genetic diseases on individuals and families. J Genet Couns 2007;16:71–83.

Payne K, Nicholls S, McAllister M, MacLeod R, Donnai D, Davies LM. Outcome measurement in clinical genetics services: a systematic review of validated measures. Value Health 2008;11:497–508.

Payne K, McAllister M, Davies LM. Valuing the economic benefits of complex interventions: when maximising health is not sufficient. Health Econ 2013;22:258–271.

McAllister M, Wood AM, Dunn G, Shiloh S, Todd C. The Genetic Counseling Outcome Scale: a new patient-reported outcome measure for clinical genetics services. Clin Genet 2011;79:413–424.

Al-Janabi H, Flynn TN, Coast J. Development of a self-report measure of capability wellbeing for adults: the ICECAP-A. Qual Life Res 2012;21:167–176.

Sen A. Capability and well-being. In: Nussbaum M, Sen A (eds). The Quality of Life. Clarendon Press: Oxford, 1993. pp. 30–39.

Flynn TN, Huynh E, Peters TJ, et al. Scoring the ICECAP-A capability instrument. Estimation of a UK general population tariff. Health Econ 2015;24:258–269.

Al-Janabi H, Peters TJ, Brazier J, et al. An investigation of the construct validity of the ICECAP-A capability measure. Qual Life Res 2013;22:1831–1840.

Rabin R, de Charro F. EQ-5D: a measure of health status from the EuroQol Group. Ann Med 2001;33:337–343.

NICE. Guide to the Methods of Technology Appraisal 2013. 2013. http://www.nice.org.uk/article/pmg9/resources/non-guidance-guide-to-the-methods-of-technology-appraisal-2013-pdf. Accessed 29 July 2015.

Dolan P. Modeling valuations for EuroQol health states. Med Care 1997;35:1095–1108.

Beecham J, Knapp M. Client Service Receipt Inventory. http://dirum.org/instruments/details/44. Accessed 22 October 2015.

Kraemer HC, Mintz J, Noda A, Tinklenberg J, Yesavage JA. Caution regarding the use of pilot studies to guide power calculations for study proposals. Arch Gen Psychiatry 2006;63:484–489.

McHorney CA, Tarlov AR. Individual-patient monitoring in clinical practice: are available health status surveys adequate? Qual Life Res 1995;4:293–307.

Lim CR, Harris K, Dawson J, Beard DJ, Fitzpatrick R, Price AJ. Floor and ceiling effects in the OHS: an analysis of the NHS PROMs data set. BMJ Open 2015;5:e007765.

White IR, Carlin JB. Bias and efficiency of multiple imputation compared with complete-case analysis for missing covariate values. Stat Med 2010;29:2920–2931.

Stata. [MI] Multiple Imputation—Stata. 2013. http://www.stata.com/manuals13/mimiestimate.pdf. Accessed 2 January 2015.

Department of Health. National Schedule of Reference Costs: The Main Schedule. 2014. https://www.gov.uk/government/publications/nhs-reference-costs-2013-to-2014. Accessed 2 January 2015.

Curtis L. Unit Costs of Health and Social Care 2014. PSSRU. 2014. http://www.pssru.ac.uk/project-pages/unit-costs/2014/index.php. Accessed 5 January 2015.

Janssen MF, Pickard AS, Golicki D, et al. Measurement properties of the EQ-5D-5L compared to the EQ-5D-3L across eight patient groups: a multi-country study. Qual Life Res 2013;22:1717–1727.

Acknowledgements

We thank Cheryl Jones (Manchester Centre for Health Economics, University of Manchester) for help conducting telephone interviews. We also thank the patients who consented to participate in the study. This study was supported by Fight for Sight (grant 1801) as part of its program of research for the prevention and treatment of blindness and eye disease. The funding organization had no role in the design or conduct of the study, analysis of the data, or preparation of the manuscript. N.D. received a National Institute for Health Research (NIHR) Research Methods Fellowship to contribute to the study.

Supplementary information

Supplementary Tables and Figure

About this article

Cite this article

Davison, N., Payne, K., Eden, M. et al. Exploring the feasibility of delivering standardized genomic care using ophthalmology as an example. Genet Med 19, 1032–1039 (2017). https://doi.org/10.1038/gim.2017.9

DOI: https://doi.org/10.1038/gim.2017.9
